
August 29, 2025

Just a couple of years ago, renaming a “Submit Now” button would have involved running an entire regression suite. Testers would deal with broken, brittle scripts, dashboards already firing alerts, and complex XPath expressions.

Today, the process is far easier and takes less than half the time. Why?

AI Tools for Software Testing

AI capabilities transform workflows across modern QA orgs. In fact:

- 68% of organizations are either actively utilizing Gen AI (34%) or have developed roadmaps following successful pilot implementations (34%).

- 72% of respondents reported faster automation processes as a result of Gen AI integration.

Another 2024 developer survey shows:

- 76% of all respondents are using or are planning to use AI tools in their development process in 2024.

- More developers are using AI tools this year than last year (62% vs. 44%).

This article will discuss the popularity of AI tools by analyzing how they supercharge QA operations. AI engines bring such tangible advantages to QA and business pipelines that the lack of adoption can only lead to losing out on a competitive advantage. This technology reduces work for testers and delivers long-term value.

Here’s how.

Why QA Teams are Switching to AI tools for Software Testing

AI agents act as autonomous entities, capable of ingesting reams of historical/relevant data and making technical decisions. It’s like having a highly knowledgeable and trained assistant by your side, predicting your needs and surfacing deep insights continuously.

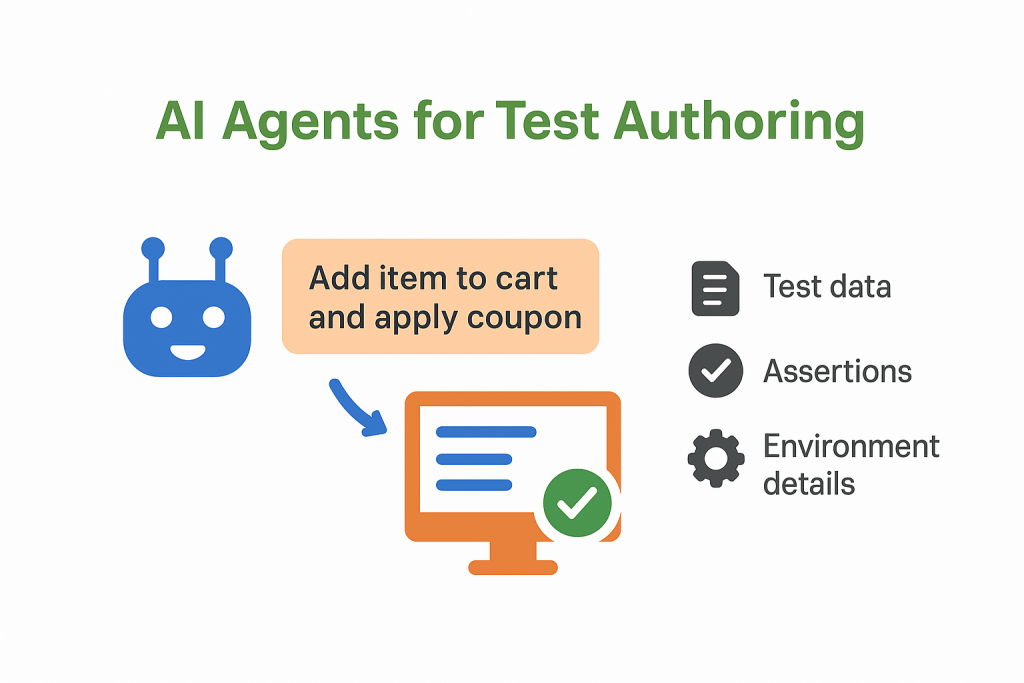

AI Agents for Test Authoring

AI agents, such as the one powering TestWheel’s automation capabilities, convert user stories, requirements, and notes into executable test cases. Testers can leave commands in natural language. The agent translates the commands into runnable steps with test data, assertions, and environment details.

The LLM, already trained on domain information, studies user intent (“add item to cart and apply coupon”, for example). It maps the requirement to UI/API interactions and inserts stable locators and smart waits. It can also automatically generate scripts for Selenium, Playwright, Cypress, and other frameworks.

With AI in software testing, the time taken to author scripts drops significantly. Test coverage expands: more tests in less time. No more boilerplate tests, as AI agents can craft precise test cases mapped to specific intent.

AI Agents for Self-Help & Assisted Testing

In this role, AI agents run like QA copilots. For instance, they answer “how do I…?” questions, recommend assertions, analyze failed tests, and suggest actionable next steps. They can also generate synthetic data, rerun tests with diagnostics, and log defects with full context.

Agentic AI software testing pulls from the organization’s test run history, test cases, logs, and documentation. It proposes the next steps or runs them with human approval.

The practice lowers context switching and reduces MTTD/MTTR for test failures.

The potential for value is enormous, as declared by Capgemini, “AI agents are poised to deliver up to $450 billion in economic value by 2028 through revenue gains and cost savings.”

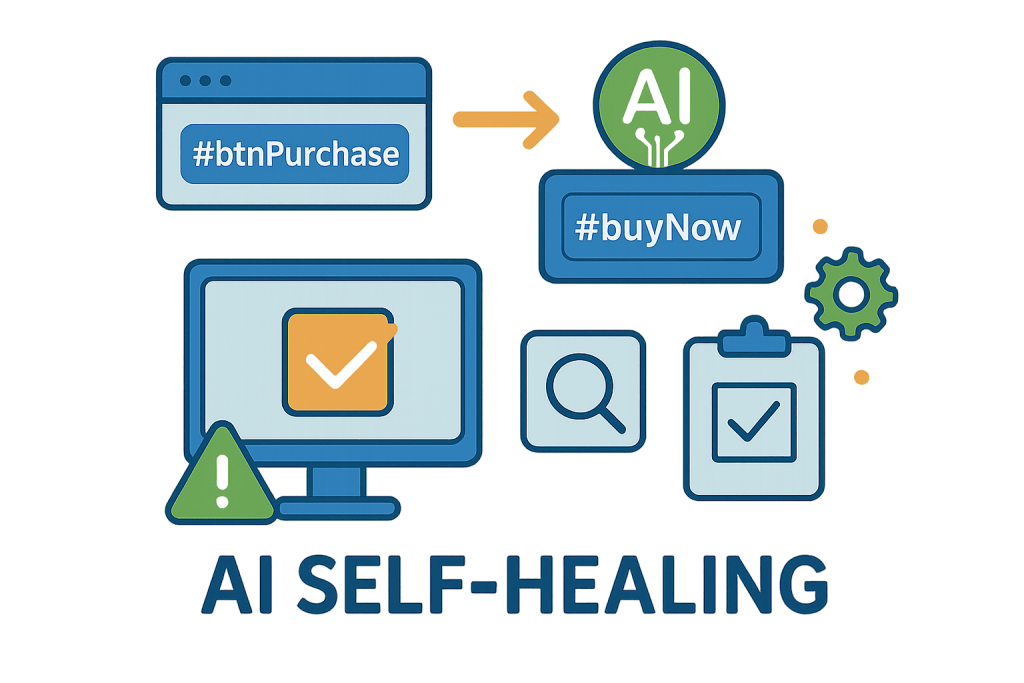

AI Self-Healing for Tests After UI Updates

When powered and maintained by AI engines, test pipelines can adjust scripts with every UI change. Whenever a UI element changes, AI automatically updates the locator and/or test step. It also automatically reruns the script for validation, instead of failing the suite.

TestWheel will, for instance, capture a semantic fingerprint of elements (attributes + text + role + relative position) and add computer-vision anchors. Say #btnPurchase becomes #buyNow. The engine then triangulates using backup selectors and historical context, patches the step, and learns from the fix.
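The matching idea can be sketched in a few lines of Python. This is a minimal illustration, not TestWheel's implementation: the `Fingerprint` fields, the weights, the 500-pixel distance scale, and the 0.8 threshold are all assumptions chosen for the example, and the computer-vision anchors are left out.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import List, Optional


@dataclass(frozen=True)
class Fingerprint:
    """Hypothetical element fingerprint: selector plus role, text, position."""
    selector: str
    role: str
    text: str
    x: int  # left edge in pixels
    y: int  # top edge in pixels


def similarity(old: Fingerprint, new: Fingerprint) -> float:
    """Score in [0, 1]. The weights are illustrative, not tuned."""
    role_score = 1.0 if old.role == new.role else 0.0
    text_score = SequenceMatcher(None, old.text.lower(), new.text.lower()).ratio()
    distance = abs(old.x - new.x) + abs(old.y - new.y)
    position_score = max(0.0, 1.0 - distance / 500)
    return 0.3 * role_score + 0.5 * text_score + 0.2 * position_score


def heal(old: Fingerprint, candidates: List[Fingerprint],
         threshold: float = 0.8) -> Optional[str]:
    """Return the best candidate's selector, or None if nothing is close enough."""
    if not candidates:
        return None
    best = max(candidates, key=lambda c: similarity(old, c))
    return best.selector if similarity(old, best) >= threshold else None


# The recorded element used to be #btnPurchase; the new page has two buttons.
recorded = Fingerprint("#btnPurchase", "button", "Buy now", 640, 480)
page = [
    Fingerprint("#buyNow", "button", "Buy now", 642, 484),
    Fingerprint("#cancel", "button", "Cancel", 520, 484),
]
print(heal(recorded, page))  # prints "#buyNow"
```

Returning `None` below the threshold is the important design choice here: when no candidate is a confident match, the step should fail visibly or go to a human rather than be patched to the wrong element.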

Self-healing goes a long way in preventing flaky tests. By adapting test scripts to code changes, tests can keep running smoothly without much need for human modification. Testers only need to worry about final approvals and serious anomalies/emergencies.

AI Agents for Converting Test Cases into Codeless Flows

Low-code test automation tools like TestWheel use AI to import manual test cases or even Selenium scripts. The platform’s parsing engines convert code or Excel-based test cases into runnable tests. As a result, QA engineers don’t have to rebuild their existing tests from scratch in order to migrate them.

At the backend, parsing engines create an AST from Selenium/JUnit/TestNG or structured manuals. The LLM stabilizes test steps, finds relevant data oracles, and rewrites tests into codeless/low-code flows with stable locators.
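As a rough illustration of the AST step, the sketch below uses Python's standard `ast` module to turn a small Selenium (Python) script into a list of framework-neutral steps. The step dictionary format, the `to_steps` helper, and the example URL are hypothetical; a real converter would handle many more call shapes, and the LLM-driven stabilization described above is not shown.

```python
import ast

# A tiny Selenium-style script, kept as text. It is parsed, never executed,
# so Selenium itself does not need to be installed.
SCRIPT = '''
driver.get("https://shop.example/cart")
driver.find_element(By.ID, "coupon").send_keys("SAVE10")
driver.find_element(By.ID, "apply").click()
'''


def locator_of(call: ast.Call) -> str:
    """Render find_element(By.ID, "coupon") as 'ID=coupon'."""
    strategy, value = call.args
    return f"{strategy.attr}={value.value}"


def to_steps(source: str) -> list:
    """Walk top-level statements and emit one step dict per recognized call."""
    steps = []
    for node in ast.parse(source).body:
        if not (isinstance(node, ast.Expr) and isinstance(node.value, ast.Call)):
            continue
        call = node.value
        if not isinstance(call.func, ast.Attribute):
            continue
        action, target = call.func.attr, call.func.value
        if action == "get":
            steps.append({"action": "open", "url": call.args[0].value})
        elif (isinstance(target, ast.Call)
              and getattr(target.func, "attr", "") == "find_element"):
            step = {"action": action, "target": locator_of(target)}
            if call.args:  # e.g. the text passed to send_keys
                step["value"] = call.args[0].value
            steps.append(step)
    return steps


for step in to_steps(SCRIPT):
    print(step)
```

Working on the syntax tree rather than on raw text means formatting, comments, and whitespace in the original script do not affect the extracted steps.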

This ability helps preserve organizations’ knowledge bases and acts as a serious contender for the title of “reliable Selenium alternative”.

The Business Case for Agentic AI Software Testing

Agentic AI is quickly becoming key to helping high-velocity teams ship software safely. It reduces the time and effort needed to author tests and maintain datasets and suites, lowers flakiness, and helps prioritize the right tests to re-run.

Agents can also find and highlight more bugs and insights, contributing to quick and effective triage.

AI can also democratize access to knowledge and skills, facilitating higher productivity for humans and machines. IDC predicts that by 2028, 80% of foundation models used for production-grade use cases will include multimodal AI capabilities — exactly what AI-powered testing solutions like TestWheel are aiming for.

Faster Authoring, Richer Coverage

AI agents convert user stories into functional tests, ready for execution. They propose assertions/data and import test cases from external sources like Excel sheets or Selenium scripts. All this in half the time manual testers would take.

AI can create more tests in short durations, providing wider coverage. Testers get more done in less time, with less effort. This translates to far superior ROI in the short and long term.

Low Flakiness and Self-Healing UI Tests

AI agents can calibrate tests to match changes in UI and underlying code. Agentic systems automatically heal locators, cluster failures, and flag likely flaky tests. The systems quarantine these tests, then fix or eliminate them. This increases trust in the test flows, lowers regression time, and prevents delayed merges as well as slipped releases.
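One simple signal for "likely flaky" is a test that both passes and fails on the same commit, since the code did not change between runs. The sketch below applies that heuristic to a hypothetical run history; the data and the `find_flaky` helper are assumptions for illustration, and a production system would combine this with other signals.

```python
from collections import defaultdict

# Hypothetical run history: (test name, commit SHA, passed?)
RUNS = [
    ("test_checkout", "a1f3", True),
    ("test_checkout", "a1f3", False),
    ("test_checkout", "a1f3", True),
    ("test_login", "a1f3", True),
    ("test_login", "b7c9", True),
    ("test_search", "b7c9", False),
    ("test_search", "b7c9", False),
]


def find_flaky(runs):
    """Return tests that produced both outcomes on at least one commit."""
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    return sorted({test for (test, _), seen in outcomes.items()
                   if seen == {True, False}})


print(find_flaky(RUNS))  # prints ['test_checkout']
```

Note that `test_search` fails consistently, so it is treated as a real failure rather than a quarantine candidate.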

Predictive Test Selection (PTS) to Lower Cost and Lead Time

PTS reduces infrastructure cost by selecting the tests most likely to fail for a given change and running only those. Done well, test cycles end faster while few, if any, bugs escape.
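A minimal way to picture test selection is a co-failure count: for the files touched by a change, rank tests by how often they failed when those files changed in the past. This is a deliberately simple frequency heuristic standing in for a trained model; the history data, the `select_tests` helper, and the `top_n` cutoff are assumptions for illustration.

```python
from collections import Counter

# Hypothetical history: (files changed in a commit, tests that failed on it)
HISTORY = [
    ({"cart.py"}, {"test_cart", "test_checkout"}),
    ({"cart.py", "coupon.py"}, {"test_coupon"}),
    ({"login.py"}, {"test_login"}),
    ({"cart.py"}, {"test_cart"}),
]


def select_tests(changed, history, top_n=2):
    """Rank tests by how often they failed alongside any of the changed files."""
    scores = Counter()
    for files, failed in history:
        if files & changed:  # this past commit touched at least one changed file
            scores.update(failed)
    # Highest count first; ties broken alphabetically for a stable order.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [test for test, _ in ranked[:top_n]]


print(select_tests({"cart.py"}, HISTORY))  # prints ['test_cart', 'test_checkout']
```

The cutoff is where the cost/risk trade-off lives: with `top_n=2`, `test_coupon` is skipped even though it has failed with `cart.py` before, which is why selected runs are usually backed by a periodic full run.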

AI Agents Explain, Act, and Learn

AI agents can study test runs and historic logs. They can then use this information to rerun failed tests with diagnostic insights. They can also create minimal reproducible examples (minimal repros) faster, and file bugs with the right screenshots and logs.

Naturally, the models grow more intelligent with time and repeated execution, sharpening their ability to predict, build, execute, and analyze.

Humans are still in charge of reviewing and approving AI activity, so no jobs are being replaced. Just more efficiency and cost savings.

How TestWheel is Poised to Transform Software Testing with AI

TestWheel combines all the aforementioned AI-operated capabilities — agentic test creation, self-healing, intelligent test selection, continuous learning — into a single coherent workflow.

Here’s how it actually works in QA teams’ day-to-day routines:

Unified AI-Enhanced Runtime

TestWheel implements event-driven pipelines, assisted by LLMs. Every run, log, trace, DOM snapshot, and screenshot is slotted into a versioned storage location. Augmented models utilize this data, and a policy engine is configured to determine exactly how that data is used (suggestions vs. auto-execution, for example).

TestWheel’s agentic AI software testing also retains full audit trails, enables routine fixes, and gatekeeps high-impact changes with human approval.
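The split between routine fixes and approval-gated changes can be pictured as a small decision function. The sketch below is an assumption-laden illustration, not TestWheel's policy engine: the action names, the two risk tiers, and the 0.9 confidence threshold are all hypothetical.

```python
from enum import Enum


class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    SUGGEST = "suggest"
    REQUIRE_APPROVAL = "require_approval"


# Hypothetical action names grouped into risk tiers.
HIGH_IMPACT = {"delete_test", "change_assertion"}
ROUTINE = {"update_locator", "rerun_test"}


def decide(action: str, confidence: float, auto_threshold: float = 0.9) -> Decision:
    """Map a proposed agent action to what the platform is allowed to do with it."""
    if action in HIGH_IMPACT:
        return Decision.REQUIRE_APPROVAL  # always gated, whatever the confidence
    if action in ROUTINE and confidence >= auto_threshold:
        return Decision.AUTO_EXECUTE
    return Decision.SUGGEST  # low confidence or unknown action: only propose


print(decide("update_locator", 0.95).value)    # prints "auto_execute"
print(decide("change_assertion", 0.99).value)  # prints "require_approval"
```

Checking the high-impact tier first means a very confident model still cannot bypass human approval for changes that alter what a test actually verifies.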

Easy Test Creation

TestWheel’s AI model-backed protocols can be configured with precise acceptance criteria for user stories, so that they can quickly build executable test scripts across web, mobile, and API.

On receiving a user story/Excel file, the LLM aligns the test goal with UI/API interactions, locators, relevant data, and so on.

It builds selector graphs while considering accessibility roles, text intent, attributes, relative layout, and historical matches. This enhances test resilience.

Export tests to code, or run them directly in TestWheel’s automation flow. Ideal for teams looking for a Selenium alternative or transitioning from legacy stacks.

Comprehensive Assistance with Teaching and Self-Help

TestWheel’s AI capabilities inspect traces, console logs, network calls, and HAR files on every test failure. They can rerun tests with tracing and diagnostics, throttle networks for specific tests, and capture artifacts automatically.

Test results map failures to exact steps, the DOM state, and API payloads. Testers can approve actions like rerunning tests, quarantining them, or opening a Jira ticket, and the platform will take over.

These extensive reports are also excellent material for onboarding new testers and training new hires.

Instant Self-Healing

TestWheel detects selector drifts due to UI changes and repairs tests without manual intervention.

For example, the platform won’t bother with a changed button ID if role/text/position similarities stay high. AI will update the selector graph, document the patch, and rerun tests. It keeps automated testing quiet, explainable, and reversible.
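"Explainable and reversible" implies that every automatic patch is recorded with enough context to undo it. A minimal sketch of such a patch log follows; the `Patch` and `SelectorStore` classes, the step ID, and the reason string are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Patch:
    """One recorded selector change, with the reason the engine gave for it."""
    step_id: str
    old_selector: str
    new_selector: str
    reason: str


@dataclass
class SelectorStore:
    """Current selector per test step, plus an append-only patch history."""
    selectors: dict
    history: list = field(default_factory=list)

    def apply(self, step_id: str, new_selector: str, reason: str) -> Patch:
        patch = Patch(step_id, self.selectors[step_id], new_selector, reason)
        self.selectors[step_id] = new_selector
        self.history.append(patch)
        return patch

    def revert_last(self) -> Patch:
        """Undo the most recent patch and return it."""
        patch = self.history.pop()
        self.selectors[patch.step_id] = patch.old_selector
        return patch


store = SelectorStore({"checkout.pay": "#btnPurchase"})
store.apply("checkout.pay", "#buyNow", reason="ID changed; role and text match")
print(store.selectors["checkout.pay"])  # prints "#buyNow"
store.revert_last()
print(store.selectors["checkout.pay"])  # prints "#btnPurchase"
```

Keeping the old selector and the reason in each record is what lets a reviewer see why a test changed and roll it back if the heal was wrong.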

Easy Test Case Conversion

TestWheel can create tests from Selenium (Java/Python), Cypress, Playwright, and structured manual cases. AI engines will parse steps and assertions from your script into TestWheel’s no-code execution funnels.

QAs can see original vs. converted steps before approving the latter.

TestWheel allows QAs to surgically define intent, rather than just picking from boilerplate scenarios. It reduces manual effort, shrinks maintenance drag, and keeps everything subject to human approval. All this while running no-code flows.